Whoa!

I was knee-deep in a failing sandwich trade last month and something felt off about the gas math.

Most wallets show raw numbers, not context or failure modes.

My instinct said there had to be a better way to preview outcomes before signing away funds.

After digging through simulations, re-entrancy checks, and a handful of honest developer chats, I started to see a pattern: wallets that simulate every step reduce surprise losses dramatically, though the tooling is not yet perfect and the UX still trips people up.

Seriously?

Yes.

Transaction simulation is not just a convenience feature.

It’s a risk-control layer that should sit between your intent and the blockchain.

On one hand it filters obvious failures; on the other hand it can lull you into false confidence if you treat simulation as prophecy rather than hypothesis.

Hmm…

Here’s the thing.

Simulations run against a snapshot of chain state and make assumptions about mempool conditions, and both are inherently fuzzy.

They provide a probability-weighted picture, not a deterministic outcome, so you still need mental models about slippage, frontrunning, and miner or validator behavior.

Initially I thought simulations would solve everything, but then I realized that good simulations require both quality data and clear user signals, which many wallets don’t surface well.

How simulation-first wallets change the risk math

Okay, so check this out—simulating a transaction gives you three big advantages.

First, you can see failure reasons before signing: insufficient balance, revert errors, or bad pathing.

Second, you can test edge cases like token approvals or extreme slippage without spending real gas.

Third, you get visibility into gas usage and internal calls that commonly hide exploit vectors, though some edge attack patterns will still escape basic sims.

On a practical level, sim results let you triage trades quickly.

A simulation that shows a revert is a red flag; stop there.

A simulation that shows a risky internal call is an amber flag—proceed with caution.

A green simulation still demands context: which relayers or mempool behaviors are assumed, and were the oracles tested? (Oh, and by the way, oracles can be the weakest link.)
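The red/amber/green triage can be sketched in a few lines of Python. This is only an illustration: the `SimResult` shape and the `RISKY_CALLS` set are assumptions made up for the example, not any wallet's or provider's actual format.

```python
from dataclasses import dataclass, field
from enum import Enum


class Flag(Enum):
    RED = "red"      # simulation reverted: stop
    AMBER = "amber"  # risky internal call: proceed with caution
    GREEN = "green"  # nothing flagged: still check the assumptions


@dataclass
class SimResult:
    # Hypothetical result shape; real simulators return richer data.
    reverted: bool
    revert_reason: str = ""
    internal_calls: list = field(default_factory=list)


# Call types treated as risky for this illustration only.
RISKY_CALLS = {"DELEGATECALL", "SELFDESTRUCT"}


def triage(result: SimResult) -> Flag:
    """Map a simulation result to a red/amber/green flag."""
    if result.reverted:
        return Flag.RED
    if any(call in RISKY_CALLS for call in result.internal_calls):
        return Flag.AMBER
    return Flag.GREEN
```

A green flag here only means nothing matched the rules; it says nothing about oracle freshness or mempool assumptions, which still need a human look.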

I’ll be honest—I’m biased toward wallets that surface this level of detail.

That bias comes from watching people sign dozens of approvals with blind faith.

A wallet that simulates approvals, aggregates calls, and visualizes multi-step outcomes reduces cognitive load.

It makes complex strategies like zap-in or position rebalancing feel less like guesswork and more like engineering, even though markets still bite sometimes.

Something else bugs me about most DeFi interfaces: they hide internal calls.

You click “confirm” and hope the dApp isn’t chaining 12 token transfers and a flash loan behind the scenes.

A simulation-inspecting wallet exposes those calls and labels them, which helps users decide if they want to proceed.
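To make "exposes those calls and labels them" concrete, here is a minimal sketch that walks a nested call trace and prints one labeled line per hop. The trace layout (a dict with `type`, `to`, and nested `calls`) is loosely modeled on the shape call tracers tend to return, but treat the field names, the addresses, and the `KNOWN_LABELS` address book as assumptions for illustration.

```python
# Hypothetical address book; a real wallet would use a curated registry.
KNOWN_LABELS = {
    "0xRouter": "DEX router",
    "0xTokenA": "Token A",
}


def flatten_calls(trace, depth=0):
    """Yield (depth, call_type, target) for every call in a nested trace."""
    yield depth, trace["type"], trace["to"]
    for child in trace.get("calls", []):
        yield from flatten_calls(child, depth + 1)


def describe(trace):
    """Return one indented, labeled line per call so unknown hops stand out."""
    lines = []
    for depth, call_type, target in flatten_calls(trace):
        label = KNOWN_LABELS.get(target, "UNRECOGNIZED")
        lines.append(f"{'  ' * depth}{call_type} -> {target} ({label})")
    return lines


# Made-up example trace: a router call that touches a token and an unknown contract.
example_trace = {
    "type": "CALL",
    "to": "0xRouter",
    "calls": [
        {"type": "CALL", "to": "0xTokenA"},
        {"type": "DELEGATECALL", "to": "0xUnknown"},
    ],
}

for line in describe(example_trace):
    print(line)
```

Anything that comes back as `UNRECOGNIZED` is a hop worth questioning before you sign.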

My instinct said that exposure would shift behavior—and data shows fewer reckless approvals when users can see the internal flow.

Where risk assessment usually breaks down

Short answer: assumptions.

Many users assume that a successful simulation equals a safe transaction.

That’s not right.

Simulations rely on node state snapshots and heuristics about mempool ordering; they can miss sandwich attacks or mempool-only adversary logic if the attacker reacts faster than your simulation can predict.

Another failure mode is over-trusting third-party simulators.

If the sim provider has stale price feeds or a misconfigured EVM fork, results lie.

You must cross-check sims against multiple nodes or providers when stakes are high, especially during liquidity crunches.

I started cross-validating simulations across two providers when I moved into higher-value positions—caution paid off, honestly.

Also, user comprehension is the weak link.

A simulation can spit out a stack trace or a weird revert code and users nod and sign anyway.

So UI matters—label the risk, quantify the probability, and offer safer defaults.

For instance: auto-cancel fallback approvals, limit slippage buckets, and require explicit consent for cross-contract calls.

Practical checklist for simulation-driven risk assessment

1) Run the sim and read the failure reason.

2) Check gas and internal calls—do you recognize every hop?

3) Cross-validate price impact using a second provider.

4) Prefer wallets that simulate permit-style approvals to reduce on-chain approvals.

5) Always set conservative slippage and review recipient addresses.

These steps are small, but they make a big difference when something goes sideways.
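For step 5, "conservative slippage" usually boils down to a minimum acceptable output. Here is a rough sketch of that arithmetic, assuming slippage is expressed in basis points and amounts are integers in the token's smallest unit; the function name is made up for this example.

```python
def min_amount_out(expected_out: int, slippage_bps: int) -> int:
    """Smallest output you will accept, given a slippage tolerance in basis points.

    1 basis point = 0.01%, so 50 bps = 0.5%.
    Integer math avoids float rounding on token amounts.
    """
    if not 0 <= slippage_bps <= 10_000:
        raise ValueError("slippage_bps must be between 0 and 10000")
    return expected_out * (10_000 - slippage_bps) // 10_000


# A simulated output of 1,000,000 units with 0.5% tolerance.
print(min_amount_out(1_000_000, 50))  # 995000
```

If the simulated output is already close to that floor, the trade has little room left before it reverts or gets sandwiched.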

I’ll add a subtle one: watch for simulation divergence.

If two simulations disagree materially, pause and dig.

One may assume a different oracle or a different liquidity depth than the other.

That’s a sign of market stress or misconfigured tooling.
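A divergence check can be as simple as comparing the output amounts two simulations report. The sketch below is one way to do it; the 0.5% tolerance is an arbitrary assumption, not a recommended threshold, and the function names are made up.

```python
def relative_divergence(out_a: int, out_b: int) -> float:
    """Relative gap between two simulated output amounts (0.0 means identical)."""
    if out_a == out_b:
        return 0.0
    return abs(out_a - out_b) / max(out_a, out_b)


def should_pause(out_a: int, out_b: int, tolerance: float = 0.005) -> bool:
    """True when two simulations disagree by more than the tolerance."""
    return relative_divergence(out_a, out_b) > tolerance


# Provider A simulates 1000 units out, provider B simulates 990: a 1% gap.
print(should_pause(1000, 990))  # True
```

The check cannot tell you which simulation is wrong. It only tells you that at least one of them is working from different assumptions.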

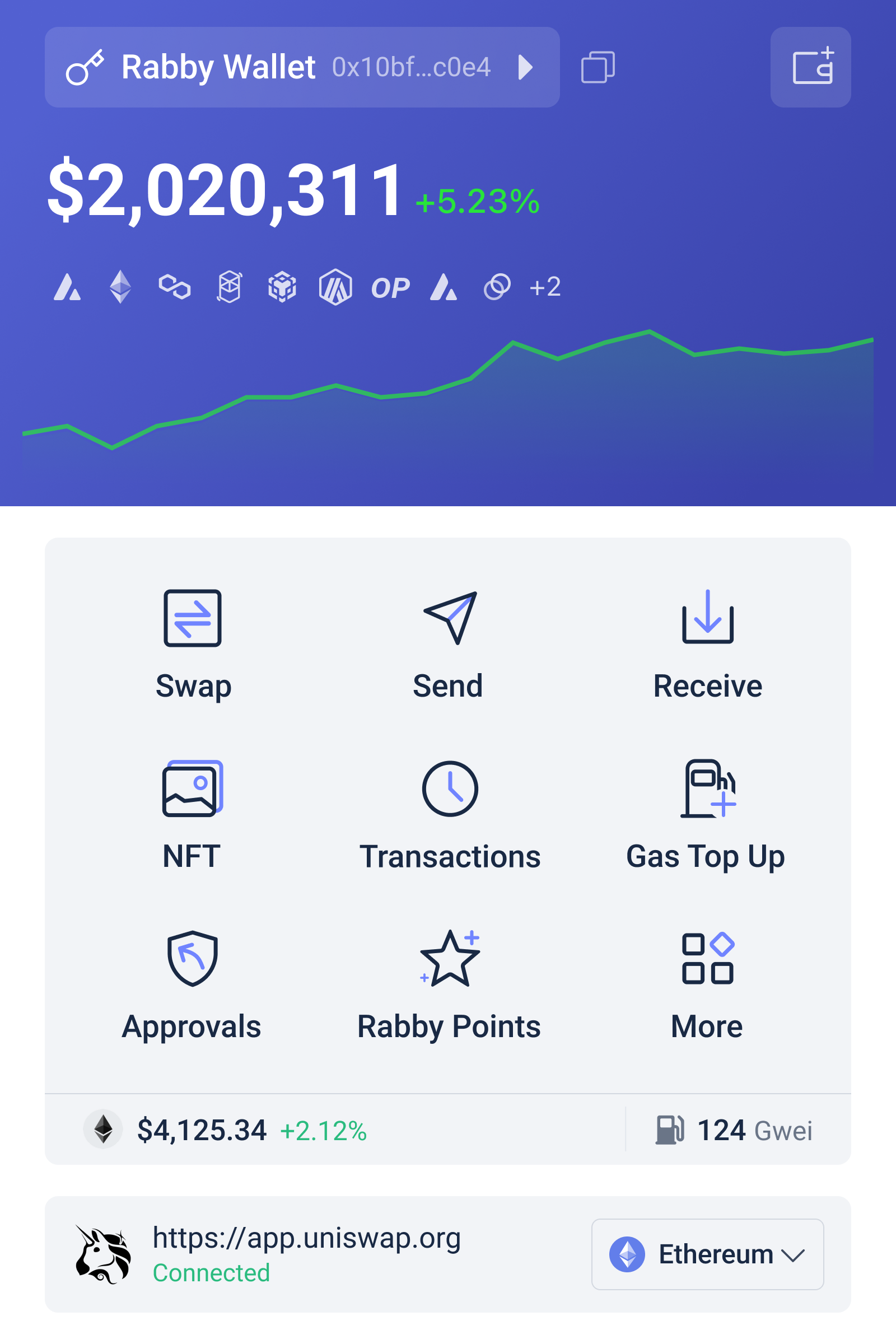

A word on wallet choice and integrations

Wallets that bake simulation into the signing flow push users toward smarter decisions.

I’m not affiliated with every tool out there, but I do prefer interfaces that show a call-by-call preview and explain potential failure modes in plain English.

If you’re trying new wallets, look for those features.

For a modern, simulation-forward experience that I recommend checking out, try here—they surface internal calls and simulate multi-step transactions in a way that feels intentional, not tacked-on.

Seriously, user education plus the right tooling cuts avoidable losses quickly.

But remember: no tool replaces critical thinking.

Think about incentives: who benefits if this transaction succeeds?

If the answer isn’t you, or it’s unclear, back away.

Common questions about simulation and risk

Can a simulation predict MEV attacks?

Short answer: not perfectly.

Simulations can flag high-friction opportunities where MEV bots usually strike, like large slippage and long execution paths.

They struggle to predict dynamic mempool adversaries that reorder or insert transactions in real time, though improved sims that use mempool watchers are narrowing that gap.

Should I always trust a wallet’s simulation?

No.

Treat simulation output as probabilistic guidance, not absolute truth.

Cross-check when value is material.

Prefer wallets exposing raw calls and failure reasons so you can audit or share them with a third party before proceeding.

Are gas estimations reliable in simulations?

Mostly, but not always.

Simulated gas can underestimate the gas actually used if the on-chain state changes between simulation and inclusion, which is more likely under network stress.

Add a buffer and consider higher priority fees if rapid inclusion is needed, though that also raises execution cost and MEV exposure.
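As a rough sketch of "add a buffer", the snippet below pads a simulated gas estimate by a percentage and computes the worst-case fee for that limit. The 20% buffer and the fee numbers are assumptions picked for the example, and the function names are made up.

```python
def padded_gas_limit(simulated_gas: int, buffer_pct: int = 20) -> int:
    """Gas limit with a percentage buffer on top of the simulated usage, rounded up."""
    if simulated_gas < 0 or buffer_pct < 0:
        raise ValueError("inputs must be non-negative")
    return (simulated_gas * (100 + buffer_pct) + 99) // 100


def worst_case_cost_wei(gas_limit: int, max_fee_per_gas_wei: int) -> int:
    """Upper bound on the fee if the whole gas limit is consumed at the max fee."""
    return gas_limit * max_fee_per_gas_wei


GWEI = 10**9

limit = padded_gas_limit(100_000)            # 120000
cost = worst_case_cost_wei(limit, 30 * GWEI)  # 3600000000000000 wei
print(limit, cost)
```

The buffer only raises the ceiling on what you might pay. Unused gas is not charged, but a higher priority fee is paid on every unit of gas the transaction actually uses.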